|

If you have two very distinct query patterns, maybe it would be better creating two (or more) distinct stored procs, gated by an entry one based on conditions, each one with its own pattern (inclusion or removal of the nullable parameter) and letting the optimizer do its work in peace.įorcing reparsing and rebuilding plans while being hit by continuous transactions might not be the most sensible choice. Pretty low-level under-the-hood stuff and unpredictable, IMHO.

To get an insight on compile times, please take a look at:īasically, use SET STATISTICS TIME ON before your query to get console messages on time spent in compilation and execution.Īs a side note, not your question, worrying about compilation times might not be the most productive course of action. But I can pretty much guarantee that if you try to use branching where clause logic to create two completely different queries and then give it any significant amount of data you will have problems, but making two distinct queries works as well as if both of those queries lived in different stored procedures and you figured out which procedure to call at the web layer (also possible). Can you still use this method to create a query for each scenario? Sure, if you want. If you go more complex like you suggested you might be dynamic SQL is probably the better option. WHERE customerid = should work fantastic if you want to either pull all the data or a specific set of data. Set = (select customerid from dbo.table2) You can perform this with something along these lines. What you want to do is create a branching logic such that there are two distinct queries each which can have their own execution plan. You want to do whatever you can to not put your branching logic inside of the where clause that just beats up the engine trying to figure out how to possibly create a suitable plan for both of your scenarios which it cannot do and will just create performance issues. You can do what you are asking without the option recompile all that does it force it to generate a new plan every time.

In general I am seeing that recompiling is consistently faster but there are lots of post stating that this means the execution plans can come out as being far less than optimal.Īny guidance on measuring these overheads or any issues I should investigate before implementing this more widely would be gratefully received. There is however a cost to recompiling, and I'm looking to trace this overhead so I can make sensible decisions as to which of our queries to format in this way. OR EXISTS ( SELECT TOP (1) 1 A FROM dbo.TestTable2 T2 WHERE T1.ID = T2.ID AND T2.TextValue = (RECOMPILE) Īs you can see from the query plan below with the recompile table 2 is effectively removed from the execution.

OR EXISTS ( SELECT TOP (1) 1 A FROM dbo.TestTable2 T2 WHERE T1.ID = T2.ID AND T2.TextValue = (RECOMPILE) We are currently looking at recompiling the query so that the joins to the secondary table are only made when required. This is greatly over simplified as we would have multiple possible conditions, linking to multiple tables. Sample data: DROP TABLE IF EXISTS dbo.TestTable1 ĬREATE TABLE dbo.TestTable1 (ID INT NOT NULL PRIMARY KEY CLUSTERED, TextValue NVARCHAR(255) NULL) ĬREATE TABLE dbo.TestTable2 (ID INT NOT NULL PRIMARY KEY CLUSTERED, TextValue NVARCHAR(255) NULL) If customer is not null return their sales else return all sales. We have several stored procedures where we select from table 1 based on a nullable parameter in table 2.

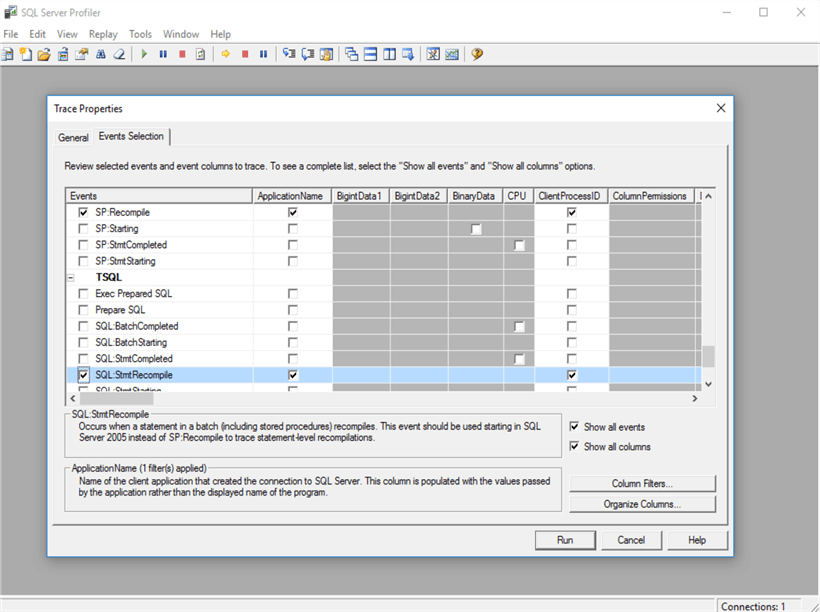

I've been looking to see if there is any way to evaluate how long it takes SQL Server to recompile the execution plan for a query.

0 Comments

Leave a Reply. |

RSS Feed

RSS Feed